Collaborative Filtering Algorithms

Collaborative Filtering Concept

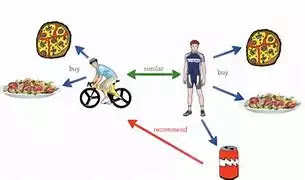

Collaborative filtering is a technique used in recommendation systems to predict a user's preferences from their past behaviour and the behaviour of similar users. The method rests on the assumption that people who have had similar preferences in the past will tend to have similar preferences in the future.

Here is an example of how collaborative filtering works: Suppose we have a dataset of user ratings for different movies. We want to recommend movies to users based on their past ratings. We use collaborative filtering to find similar users and recommend movies that these users have rated highly.

Collaborative filtering can be done using two approaches: user-based and item-based. In the user-based approach, the algorithm finds users who have similar ratings and recommends items that these users have rated highly. In the item-based approach, the algorithm finds items that are similar to the ones the user has rated highly and recommends these items.
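To make the difference between the two approaches concrete, here is a minimal sketch that uses only the Python standard library. The toy ratings, the movie titles, and the helper names `cosine` and `transpose` are assumptions made for illustration, not part of any library. The user-based view compares users (rows of the rating matrix), while the item-based view compares items (columns).

```python
from math import sqrt

# Toy ratings (hypothetical data): user -> {movie: rating on a 1-5 scale}
ratings = {
    "alice": {"Alien": 5, "Brazil": 4, "Casablanca": 1},
    "bob":   {"Alien": 4, "Brazil": 5, "Casablanca": 2},
    "carol": {"Alien": 1, "Brazil": 2, "Casablanca": 5},
}


def cosine(a, b):
    """Cosine similarity between two sparse vectors stored as dicts."""
    shared = set(a) & set(b)
    if not shared:
        return 0.0
    dot = sum(a[k] * b[k] for k in shared)
    norm_a = sqrt(sum(v * v for v in a.values()))
    norm_b = sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b)


def transpose(user_ratings):
    """Turn user -> {item: rating} into item -> {user: rating}."""
    by_item = {}
    for user, items in user_ratings.items():
        for item, r in items.items():
            by_item.setdefault(item, {})[user] = r
    return by_item


# User-based view: compare two users by the ratings they gave.
sim_alice_bob = cosine(ratings["alice"], ratings["bob"])
sim_alice_carol = cosine(ratings["alice"], ratings["carol"])

# Item-based view: compare two movies by the ratings they received.
by_item = transpose(ratings)
sim_alien_brazil = cosine(by_item["Alien"], by_item["Brazil"])
sim_alien_casablanca = cosine(by_item["Alien"], by_item["Casablanca"])

print(f"alice~bob: {sim_alice_bob:.3f}, alice~carol: {sim_alice_carol:.3f}")
print(f"Alien~Brazil: {sim_alien_brazil:.3f}, Alien~Casablanca: {sim_alien_casablanca:.3f}")
```

In this toy data, alice is closer to bob than to carol, so a user-based recommender would lean on bob's ratings when recommending to alice. Likewise, "Alien" is closer to "Brazil" than to "Casablanca", so an item-based recommender would suggest "Brazil" to someone who liked "Alien".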

Collaborative Filtering Algorithm

- Define the problem and collect data.

- Split the data into training and validation sets.

- Choose a similarity metric (e.g., cosine similarity, Pearson correlation).

- Compute the similarity matrix between all pairs of users or items.

- Predict the rating of a user for an item based on the weighted average of the ratings of similar users or items.

- Evaluate the model on the validation set to estimate its performance.

- Apply the model to new data to make predictions.
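The steps above can be sketched in plain Python for the user-based case. This is an illustrative sketch under stated assumptions, not a production implementation: the toy data, the single held-out rating, and the function names `cosine`, `predict`, and `rmse` are all hypothetical. It uses cosine similarity on raw ratings; mean-centering the ratings or using Pearson correlation are common alternatives.

```python
from math import sqrt

# Hypothetical toy data: (user, item, rating) triples, already split.
train = [
    ("u1", "A", 5), ("u1", "B", 4), ("u1", "C", 1),
    ("u2", "A", 4), ("u2", "B", 5), ("u2", "C", 2), ("u2", "D", 5),
    ("u3", "A", 1), ("u3", "B", 2), ("u3", "C", 5), ("u3", "D", 1),
]
validation = [("u1", "D", 5)]  # held-out rating, used only for evaluation

# Build user -> {item: rating} from the training set.
by_user = {}
for user, item, r in train:
    by_user.setdefault(user, {})[item] = r

global_mean = sum(r for _, _, r in train) / len(train)


def cosine(a, b):
    """Cosine similarity between two sparse rating vectors (dicts)."""
    shared = set(a) & set(b)
    if not shared:
        return 0.0
    dot = sum(a[k] * b[k] for k in shared)
    norm_a = sqrt(sum(v * v for v in a.values()))
    norm_b = sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b)


def predict(user, item):
    """Similarity-weighted average of other users' ratings for `item`."""
    mine = by_user.get(user, {})
    num = den = 0.0
    for other, theirs in by_user.items():
        if other == user or item not in theirs:
            continue
        w = cosine(mine, theirs)
        num += w * theirs[item]
        den += abs(w)
    # Fall back to the global mean when no similar user rated the item.
    return num / den if den else global_mean


def rmse(pairs):
    """Root mean squared error of predictions on (user, item, rating) triples."""
    errors = [(predict(u, i) - r) ** 2 for u, i, r in pairs]
    return sqrt(sum(errors) / len(errors))


print(f"Predicted rating of u1 for D: {predict('u1', 'D'):.2f}")
print(f"Validation RMSE: {rmse(validation):.2f}")
```

Because u1's ratings resemble u2's more than u3's, the prediction for item D is pulled towards u2's rating of 5 rather than u3's rating of 1.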

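Here is sample Python code for collaborative filtering using the Surprise library. Note that it uses SVD, a matrix-factorization model, rather than the similarity-based steps listed above: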

python code

    from surprise import Dataset, Reader, SVD
    from surprise.model_selection import cross_validate

    # Load the dataset. Each line of ratings.csv is expected to be "user,item,rating";
    # if the file has a header row, pass skip_lines=1 to Reader.
    reader = Reader(line_format='user item rating', sep=',', rating_scale=(1, 5))
    data = Dataset.load_from_file('ratings.csv', reader=reader)

    # Define the model
    model = SVD()

    # Run 5-fold cross-validation and report RMSE and MAE
    cross_validate(model, data, measures=['RMSE', 'MAE'], cv=5, verbose=True)

    # Train the model on the full dataset
    trainset = data.build_full_trainset()
    model.fit(trainset)

    # Predict ratings for a specific user.
    # IDs loaded from a file are strings, so raw IDs must be passed as strings.
    user_id = '1'
    items = ['1', '2', '3', '4', '5']
    for item in items:
        rating = model.predict(user_id, item).est
        print(f"Predicted rating of user {user_id} for item {item}: {rating:.2f}")
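Predicted ratings are usually turned into a short ranked list rather than printed one by one. The sketch below shows one way to do that in plain Python. The helper `top_n`, the `fake_scores` table, and its values are made up for illustration. In practice the `predict` argument could wrap a trained model, for example `lambda u, i: model.predict(u, i).est` with the Surprise model above.

```python
def top_n(predict, user_id, candidate_items, already_rated, n=3):
    """Return the n not-yet-rated items with the highest predicted rating."""
    scored = [
        (predict(user_id, item), item)
        for item in candidate_items
        if item not in already_rated
    ]
    # Highest predicted rating first.
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [item for _, item in scored[:n]]


# Stand-in predictor with made-up scores (hypothetical values).
fake_scores = {"1": 3.2, "2": 4.7, "3": 2.1, "4": 4.1, "5": 3.9}

recommendations = top_n(
    predict=lambda user, item: fake_scores[item],
    user_id="1",
    candidate_items=["1", "2", "3", "4", "5"],
    already_rated={"2"},  # item the user has already rated, so it is skipped
    n=2,
)
print(recommendations)
```

Filtering out items the user has already rated keeps the list from recommending things the user has seen, which is the usual design choice for this step.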

Benefits of collaborative filtering:

- Can provide personalized recommendations to users.

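- Can scale to large datasets; matrix-factorization models such as SVD tend to cope with sparse rating data better than neighborhood methods.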

- Can work with implicit feedback (e.g., user views, clicks) in addition to explicit ratings.

- Can improve over time as more data is collected.
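As a rough illustration of the implicit-feedback case, the sketch below treats each user's clicks as a set and compares users with Jaccard similarity, since there are no rating values to average. The click data and the function names `jaccard` and `recommend_from_clicks` are assumptions made for this example.

```python
# Hypothetical click logs: user -> set of item ids the user viewed or clicked.
clicks = {
    "u1": {"a", "b", "c"},
    "u2": {"b", "c", "d"},
    "u3": {"x", "y"},
}


def jaccard(s, t):
    """Size of the intersection divided by size of the union of two sets."""
    union = s | t
    return len(s & t) / len(union) if union else 0.0


def recommend_from_clicks(user, k=1):
    """Rank unseen items by the summed similarity of the users who clicked them."""
    seen = clicks[user]
    scores = {}
    for other, items in clicks.items():
        if other == user:
            continue
        w = jaccard(seen, items)
        if w == 0:
            continue  # users with no overlap contribute nothing
        for item in items - seen:
            scores[item] = scores.get(item, 0.0) + w
    return sorted(scores, key=scores.get, reverse=True)[:k]


print(recommend_from_clicks("u1"))
```

Here u1 and u2 share two of four distinct items, so u2's remaining item "d" is suggested to u1, while u3 has no overlap and is ignored.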

Advantages of collaborative filtering:

- Can handle diverse and complex user preferences.

- Can identify similarities between users and items that may not be apparent.

Disadvantages of collaborative filtering:

- It suffers from the "cold start" problem: new users or items with no ratings cannot be matched to similar users or items, so a fallback such as popularity-based or content-based recommendation is usually needed.

- It can suffer from the "sparsity" problem, where most users have rated only a small fraction of items, leaving few overlapping ratings from which to compute similarity.

- It can suffer from "popularity bias", where popular items receive more ratings and thus dominate recommendations.

- It can suffer from "similarity bias", where recommendations are too similar to the user's past preferences and offer little novelty.
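Sparsity and popularity bias can both be measured directly on a rating dataset before any model is trained. The following sketch uses made-up `(user, item, rating)` triples. The variable names are illustrative, and the numbers say nothing about any real dataset.

```python
from collections import Counter

# Hypothetical (user, item, rating) triples.
ratings = [
    ("u1", "A", 5), ("u2", "A", 4), ("u3", "A", 5), ("u4", "A", 3),
    ("u1", "B", 2), ("u2", "C", 4),
]

users = {u for u, _, _ in ratings}
items = {i for _, i, _ in ratings}

# Sparsity: share of user-item cells that have no rating.
sparsity = 1 - len(ratings) / (len(users) * len(items))

# Popularity: how many ratings each item received.
popularity = Counter(i for _, i, _ in ratings)
most_rated, count = popularity.most_common(1)[0]
share = count / len(ratings)

print(f"Sparsity: {sparsity:.0%}")
print(f"Most rated item: {most_rated} with {share:.0%} of all ratings")
```

A high sparsity value signals that similarity estimates will rest on few shared ratings, and a single item holding a large share of the ratings is a hint that recommendations may drift towards it.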
